I originally wrote PyPNG so that I could manipulate PNG images using Python. My original itch was a tiling application. Since then I’ve been using it to develop all sorts of little image utilities and to investigate PNGs. pipdither is written in Python and the initial version took about an afternoon of fairly lazy coding.

Python is a great language, and now that I’ve got a module for creating PNGs, it’s easy to create other image tools in Python.

Here is a link to an image of Earth taken from the Messenger spacecraft (a 2.1e6 octet file that you won’t be able to read). Messenger was on an Earth flyby on its way to map Mercury when it took the image. The image is archived by NASA’s PDS and stored in its IMG format, which is basically a whole boatload of metadata followed by the image data in row-major order at 16 bits per pixel.
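To make "a bunch of numbers after the metadata" concrete, here is a minimal sketch of pulling the rows out of such a file with nothing but the standard library. The function name `read_rows` is made up for illustration, and the header length, width, height and byte order are all assumptions that would have to come from the file's own label; none of them are specified in this post.

```python
import struct

def read_rows(data, header_len, width, height, byteorder=">"):
    """Return the image as a list of rows of integers.

    Assumes (hypothetically) that `data` holds `header_len` bytes of
    metadata followed by width*height unsigned 16-bit samples in
    row-major order. `byteorder` is ">" (big-endian) or "<"
    (little-endian); which one applies is an assumption to check
    against the file's label.
    """
    fmt = f"{byteorder}{width}H"        # one row of 16-bit samples
    row_bytes = struct.calcsize(fmt)
    rows = []
    for y in range(height):
        start = header_len + y * row_bytes
        rows.append(list(struct.unpack_from(fmt, data, start)))
    return rows

# Tiny synthetic example: a 4-byte fake header, then a 3x2 image.
fake = b"HDR!" + struct.pack(">6H", 1, 2, 3, 4, 5, 6)
rows = read_rows(fake, header_len=4, width=3, height=2)
```

Rows in this shape (a list of lists of integers) are also what PyPNG's writer expects, so the conversion step is mostly a matter of handing them over with the right bit depth.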

Because it’s basically just a bunch of numbers after the metadata, this is really easy to grok using Python. Here it is converted to PNG using PyPNG (this is a small 8-bit version, follow the link to get the full 12-bit version):

It looks rubbish. Someone has turned the brightness up too high on the telly. It looks rubbish for two reasons: a) I embedded a gAMA chunk to specify a gamma of 1.0 (linear); and, b) the camera’s black-level does not correspond to a code of 0. Here’s a histogram:

![]()

Sorry the histogram is a bit rubbish (the image is 12-bit, so the histogram is 4096 pixels wide). But you should be able to see that the first chunk of codes (on the left) are unused. That’s because even though there’s no light falling on the sensor, there’s thermal noise or some residual current drain, or something. So picture black is represented by a code of about 7%. And because I specified a gamma of 1.0 that 7% value in the PNG file actually looks not very dark at all.
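A histogram like this is only a few lines of Python. The sketch below is not the code used for the picture above; the function names are hypothetical and it assumes the rows are lists of integers in the 12-bit range.

```python
def histogram(rows, bitdepth=12):
    """Count how many pixels use each code (0 .. 2**bitdepth - 1)."""
    counts = [0] * (1 << bitdepth)
    for row in rows:
        for value in row:
            counts[value] += 1
    return counts

def lowest_used_code(counts):
    """Return the smallest code that occurs at all, or None if the image is empty."""
    for code, n in enumerate(counts):
        if n:
            return code
    return None

# Synthetic example: nothing below code 290 is used.
sample_rows = [[290, 300, 300], [4095, 290, 1000]]
counts = histogram(sample_rows)
black = lowest_used_code(counts)
```

Dividing the lowest used code by 4095 gives the black level as a fraction of full scale, which is where a figure like "about 7%" comes from.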

Actually I had to fiddle with WordPress quite a lot to get it looking this bad. If left to its own devices WordPress will create its own scaled down version of the image and it THROWS AWAY the gAMA chunk. If your computer ignores the PNG’s gamma chunk then maybe the image doesn’t look too bad to you (a grey level of about 7% in some sort of perceptual space looks fairly black, not actually black, but good enough that you probably won’t tell unless you open a black image next to it).
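To put rough numbers on that, here is a back-of-envelope sketch. It assumes a display that behaves like a plain power law with an exponent of 2.2, which is a common approximation and not something measured for this post; `display_code` is a made-up name.

```python
def display_code(linear, display_gamma=2.2):
    """Code value (0..1) a viewer sends to the display for a given linear
    light level, assuming a simple power-law display (an approximation)."""
    return linear ** (1.0 / display_gamma)

black = 0.07

# Viewer honours gAMA 1.0: the 7% is treated as linear light and gets
# re-encoded for the display, ending up at about 0.30 of full code.
honoured = display_code(black)

# Viewer ignores gAMA: the 7% is sent to the display as is, and the
# display's own power law turns it into a very small amount of light.
ignored = black
light_when_ignored = ignored ** 2.2
```

So with the gamma chunk honoured, "black" is displayed at roughly 30% of the code range, which is a visible grey; with it ignored, the same pixels emit well under 1% of full light.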

So clearly to get a good image I should choose some black-level, 7% say, subtract 7% from all the pixel values and rescale to fit the full range. Yeah I could. But that would destroy the original data and impose my own processing on it. What if you wanted to do your own black-level compensation?
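For reference, the destructive version would look something like the sketch below. The function name is hypothetical, and the black level of 287 is simply 7% of 4095 rounded, used here as an assumed value.

```python
def subtract_black(rows, black, maxval=4095):
    """Subtract a black level and rescale to 0..maxval.

    Codes at or below `black` become 0; `maxval` stays at `maxval`.
    Integer arithmetic with rounding to nearest. The original codes
    below `black` cannot be recovered afterwards.
    """
    span = maxval - black
    out = []
    for row in rows:
        new_row = []
        for v in row:
            d = max(0, v - black)
            new_row.append((d * maxval + span // 2) // span)
        out.append(new_row)
    return out

fixed = subtract_black([[0, 287, 4095]], black=287)
```

Everything at or below the chosen black level collapses to a single value, which is exactly the information loss that the next idea avoids.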

I had an idea. I could create an ICC profile that clamps the bottom 7% codes to black, then embed the profile in the PNG. The profile looks like this (in Apple’s ColorSync utility):

Apologies (yet again) for the fact that the screenshot doesn’t fit. WordPress would’ve scaled it, but apparently it falls over when trying to scale a 4-channel PNG. The screenshot has an alpha channel because it’s a shot of a window and the window has chamfered corners which require alpha. How amusing.

Anyway. Input is on the x-axis, output is on the y-axis. You can see that this TRC (tone reproduction curve) maps the bottom 7% to 0, and the rest goes linearly.
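A curve of that shape can be described as a sampled table. The sketch below builds one and packs it in the layout of an ICC `curveType` element (the `curv` signature, four reserved bytes, a 32-bit count, then 16-bit samples). The function names, the 256-entry table size and the exact 7% threshold are assumptions for illustration; this is not the profile-building code used for the image, and a complete profile needs a header and tag table as well.

```python
import struct

def trc_samples(n=256, black=0.07):
    """Sample a curve that is 0 up to `black`, then linear up to 1.

    Returns n integers in 0..65535, for inputs evenly spaced over 0..1.
    """
    samples = []
    for i in range(n):
        x = i / (n - 1)
        y = 0.0 if x <= black else (x - black) / (1.0 - black)
        samples.append(round(y * 65535))
    return samples

def encode_curv(samples):
    """Pack samples as an ICC curveType element (big-endian)."""
    header = struct.pack(">4s4xI", b"curv", len(samples))
    body = struct.pack(f">{len(samples)}H", *samples)
    return header + body

samples = trc_samples()
element = encode_curv(samples)
```

Because the curve lives in the profile and not in the pixel data, the samples in the PNG stay untouched; only a colour-managed viewer applies the clamp.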

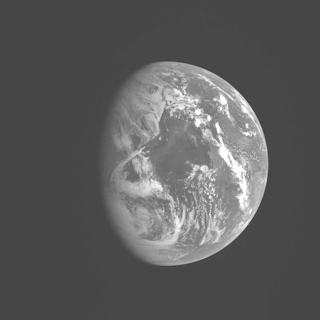

This being the first time I’ve deliberately embedded a colour profile into a PNG image, I’m slightly gobsmacked that it actually works:

You get the best of both worlds. A plausible looking picture for viewing, and all the original image data if you want to dink around with it yourself.

I’m pretty sure that a gamma of 1 is correct, by the way, because the values in the IMG file are raw 12-bit sensor values and they generally respond linearly to light. Actually they’re not quite raw, the onboard computer has compressed the image before it was transmitted to Earth, you can see compression artefacts in the “noise” in the dark regions of the picture (if you look at the version that doesn’t have a colour profile).

PS If these images look the same, your computer isn’t doing colour correction. It sucks. It works on a Mac [ed: if you use Safari]. Try my later article to see a simulation of what it looks like when it works.

2009-05-06 at 16:36:24

My computer doesn’t suck :(

2009-05-06 at 18:12:01

You mean it works on Safari. Firefox does the wrong thing.

I wonder if they’d take a patch for that?

2009-05-06 at 19:27:32

Oh. Is it really the case that Macs and SGIs are the only machines that do colour correction out of the box? Version 2 of the ICC spec has been out 8 years now.

Windows? What does that do? Anyone?

2009-05-06 at 23:25:55

On Windows Vista here:

Only FireFox 3.5b4 and Safari 4 do the right thing, the other browsers show no difference in the images

2009-05-07 at 19:08:34

@Zach: what computer is that? Really I mean, what OS?

2009-05-08 at 08:18:55

They look the same on my firefox 3.0.10 on my mac.

2009-05-08 at 09:20:45

Yes, yes. Firefox sucks, at least until 3.5b4 apparently. If you want to see what I see, see my later article.

2009-05-08 at 12:00:09

From a little more research, it looks like FireFox 3 supports this as well, but not by default. You have to tweak the about:config settings to get it to work. In FF3, it was apparently turned off by default due to the performance penalty it was giving at the time.

reference:

http://www.dria.org/wordpress/archives/2008/04/29/633/

2009-05-08 at 13:14:00

Colour profile support for the win!